UX/UI Support During Development and After Product Launch

- Neuron

- 3 days ago

Explore how UX/UI support bridges the gap between design and reality

That checkout flow you spent three months perfecting? By the time it shipped, developers had tweaked it seventeen times without asking anyone. Now customer support fields the same complaint twice a day. The problem isn't bad design. It's that UX/UI support ended the moment the wireframes got approved.

Key Takeaways:

- UX/UI support during development catches implementation drift before small compromises snowball into expensive fixes

- Post-launch design involvement turns user behavior data into targeted improvements you can actually measure

- Continuous design QA keeps your product looking and feeling consistent across every update

- Without feedback loops connecting research to implementation, learnings just evaporate

- Design systems fragment fast when nobody maintains them

Why Design Teams Vanish at the Worst Possible Moment

Budgets tighten. Timelines compress. So when wireframes get that final approval stamp, design resources typically jump to the next shiny project. Makes sense financially, right? Wrong.

About three weeks into any ambitious build, the cracks start showing. Developers hit edge cases nobody anticipated. Mobile breakpoints reveal layout disasters on iPad Mini screens that never got tested. That third-party Stripe integration? It throws up modal dialogs that clash horribly with your existing navigation patterns.

Without designers around, engineering teams improvise. They pick speed over certainty because sprint deadlines don't wait for Figma consultations. One button gets tweaked here. Spacing adjusts there. An error message gets written by someone who's never talked to an actual user.

Six months later? Your interface feels like it was designed by committee. Because it basically was.

UX/UI Support During Development Makes Your Design Actually Ship

Here's what nobody tells you about mockups. They lie. Static designs can't capture every scenario. Real code exposes timing quirks, performance bottlenecks, and platform-specific weirdness that prototypes completely miss.

Catching Drift Before It Compounds

Design QA during development serves a totally different purpose than traditional testing. Engineers verify that the code works. Designers verify that the experience feels right. Both matter. Treat them as interchangeable, and you'll regret it.

What does effective UX/UI support during development actually look like? Regular build reviews where designers evaluate real implementations against original specs. Sounds boring. Saves fortunes.

A misaligned button takes minutes to fix during development. That same button discovered three months post-launch? Now you're looking at deployment cycles, regression testing, and coordination overhead that multiplies the original effort many times over.

Common issues that development-phase reviews catch:

- Interaction timing problems, where animations lag or transitions feel janky compared to those smooth Principle prototypes

- Responsive breakpoint disasters that cause layout chaos on tablet sizes nobody remembered to test

- Empty states: the mockups assumed everything would always have data, but real users see blank screens and wonder if something broke

- Typography rendering surprises, since design tools and browsers interpret fonts differently

- Spacing creep: your design system specifies 16px margins, but production shows 12px here, 20px there, and nobody can pinpoint when it happened
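Spacing creep in particular lends itself to an automated check. The sketch below is a minimal illustration, assuming your team can export measured margins from production builds and that the design system defines a fixed spacing scale. The scale values, the `find_spacing_drift` helper, and the sample measurements are all hypothetical and not tied to any specific tool.

```python
# Hypothetical spacing scale (px), standing in for a design system's tokens.
SPACING_SCALE = {4, 8, 16, 24, 32}


def find_spacing_drift(measurements, scale=SPACING_SCALE):
    """Return (component, value) pairs whose margin is not on the scale.

    `measurements` maps a component name to its measured margin in px.
    """
    return sorted(
        (name, value)
        for name, value in measurements.items()
        if value not in scale
    )


# Illustrative values only: one on-spec margin and two that drifted.
measured = {"checkout-card": 16, "promo-banner": 12, "order-summary": 20}
drift = find_spacing_drift(measured)
print(drift)
```

Running a check like this on every build review turns "nobody can pinpoint when it happened" into a dated list of components to look at.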

Sprint demos work great for agile teams. Feature-complete checkpoints suit waterfall shops. Honestly, the rhythm matters less than actually showing up consistently.

Documenting Answers Before Everyone Forgets the Questions

Development surfaces questions your documentation never anticipated. What happens when someone pastes 10,000 characters into a field expecting 500? How should loading states behave on a flaky 3G connection? Where do error messages stack when three validation failures hit simultaneously?

Designers who stay engaged can answer these immediately and write them down for future reference. That real-time documentation prevents your team from solving the same problems over and over. Professional product strategy consulting tackles these needs head-on, helping organizations build actual institutional knowledge instead of relying on "ask Sarah, she remembers."

After Launch Everything Changes

Products don't finish at launch. They start. User behavior in production reveals patterns that no amount of internal testing can replicate. How people actually navigate differs from your assumptions. Sometimes wildly.

Reading Your Analytics Like a Designer

Analytics tell stories. But those stories need interpretation from people who understand why design decisions were made.

Picture this scenario. A B2B procurement platform notices 34% abandonment on its vendor approval form. The analytics team's recommendation? Shorten the form. Obvious, right?

A designer looking at the same data spots something different. Users aren't abandoning because the form feels long. They're bailing at one specific field asking for "procurement category codes" without explaining what those codes mean or where to find them.

Two hours of work. Add a tooltip with example codes. Abandonment drops to 12%.

A shorter form would've removed fields people actually needed and created downstream chaos. The designer's context made all the difference.
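The designer's reading in this scenario comes down to asking where in the form people stop, not just how many stop. Here is a minimal sketch of that question, assuming form analytics record the last field each abandoning session touched. The field names, the counts, and the `dropoff_by_field` helper are hypothetical and only illustrate the idea.

```python
from collections import Counter


def dropoff_by_field(last_fields):
    """Share of abandoning sessions per last-touched field, largest first."""
    counts = Counter(last_fields)
    total = len(last_fields)
    return [(field, count / total) for field, count in counts.most_common()]


# Illustrative data: the last field touched in ten abandoned sessions.
abandoned = (
    ["category_code"] * 7
    + ["vendor_name"] * 2
    + ["tax_id"]
)
ranking = dropoff_by_field(abandoned)
worst_field, share = ranking[0]
print(worst_field, share)
```

When one field dominates the ranking, the problem is more likely that field than the overall length of the form.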

UX/UI support after product launch turns analytics from passive dashboards into active improvement engines. Teams without design involvement post-launch tend to address symptoms instead of causes.

Mining Gold From Support Tickets

Support tickets contain treasure. Every complaint represents someone who cared enough to communicate their frustration instead of just leaving. Most unhappy users ghost you silently. The ones who write in? They're doing you a favor.

Designers reviewing support patterns spot recurring themes that individual tickets hide. One SaaS team noticed 23 tickets mentioning "export." Individually, each described a different frustration—slow downloads, missing columns, confusing file names. A designer mapped them together and found a single root cause: the export button appeared before data finished loading, so users clicked prematurely and got incomplete files. One fix. Twenty-three complaints resolved.

Without design eyes on this feedback, product teams build elaborate solutions to problems that need minimal fixes.

UX/UI support after product launch includes building feedback categorization systems that prioritize based on real user impact, implementation complexity, and strategic alignment.
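One way to make that prioritization explicit is a simple weighted score. The sketch below is an illustration under stated assumptions: the 1 to 5 scales, the weights, the `FeedbackTheme` type, and the `priority_score` function are hypothetical, and a real team would tune them to its own context.

```python
from dataclasses import dataclass


@dataclass
class FeedbackTheme:
    name: str
    user_impact: int          # 1 (minor annoyance) to 5 (blocks the task)
    complexity: int           # 1 (trivial fix) to 5 (major rework)
    strategic_alignment: int  # 1 (off-roadmap) to 5 (core goal)


def priority_score(theme, w_impact=0.5, w_alignment=0.3, w_complexity=0.2):
    """Higher is more urgent; complexity is inverted so cheap fixes rank up."""
    return (
        w_impact * theme.user_impact
        + w_alignment * theme.strategic_alignment
        + w_complexity * (6 - theme.complexity)
    )


# Illustrative themes and ratings only.
themes = [
    FeedbackTheme("custom report builder", 3, 5, 3),
    FeedbackTheme("export before data loads", 5, 1, 4),
]
ranked = sorted(themes, key=priority_score, reverse=True)
print([t.name for t in ranked])
```

The numbers matter less than the fact that the trade-off is written down, so the ranking can be questioned and adjusted instead of argued from memory.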

Feedback Loops That Actually Close

Information flows easily from development and production back to design, but only when systems exist to facilitate that flow. Without intentional structure, that knowledge just vanishes.

User research generates recommendations. Recommendations enter backlogs. Backlogs get reshuffled. Months pass. Context evaporates. That carefully documented finding either gets implemented incorrectly or deprioritized into oblivion. Sound familiar?

Continuous UX/UI support maintains the thread between research findings and eventual implementation. What does this look like in practice? Designers attending sprint planning to flag when a ticket contradicts earlier research. A shared document linking each backlog item to the user problem it solves. Monthly syncs where designers review what shipped and compare it against the original intent.

The mechanics matter less than the habit. Someone with design context needs visibility into what's actually getting built. For B2B products especially, this prevents the slow drift toward feature soup—where products accumulate capabilities that technically work but collectively confuse everyone.

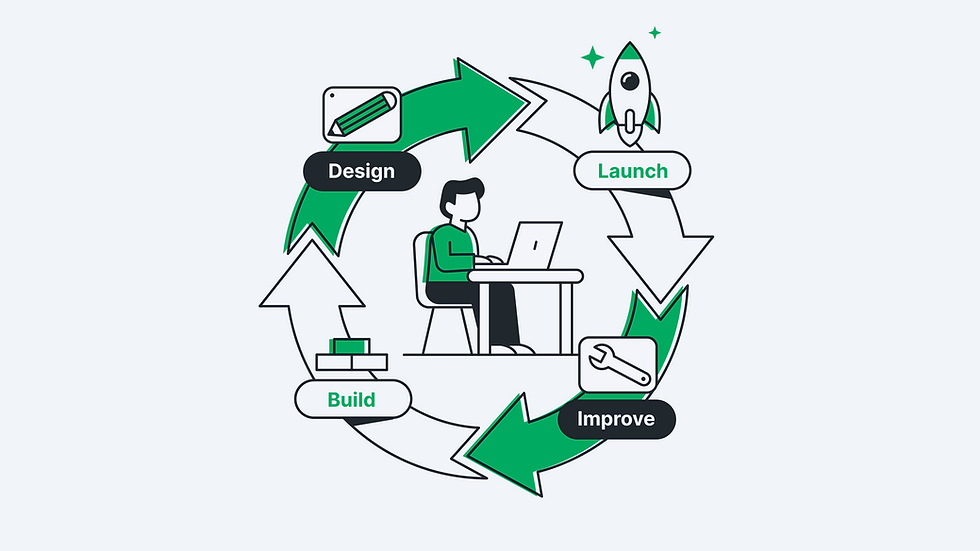

Launch generates data. Data suggests hypotheses. Testing produces new data. This cycle drives improvement when design resources stick around to participate. Organizations investing in DesignOps services create the scaffolding for sustained iteration. DesignOps establishes workflows, tools, and standards enabling ongoing design involvement without burning people out. Consistent improvement beats sporadic heroics every time.

What Each Support Phase Looks Like

| Support Phase | Primary Focus | Typical Activities | Success Metrics |
| --- | --- | --- | --- |
| During Development | Implementation fidelity | Build reviews, edge case docs, design QA, and developer pairing | Spec adherence, fewer post-launch fixes |
| Post-Launch (first 90 days) | Initial optimization | Analytics deep-dives, user feedback triage, critical bug fixes | Task completion rates, early retention |
| Ongoing Maintenance | Continuous improvement | Design system updates, quarterly audits, incremental refinements | Long-term retention, support ticket reduction |

The first 90 days post-launch typically require the heaviest design involvement. User behavior data floods in, revealing gaps between assumptions and reality. After that initial push, ongoing maintenance settles into a steadier rhythm.

Keeping Design Systems From Falling Apart

Products grow. Features multiply. Teams expand. Without active maintenance, design systems fragment fast. Components drift. Patterns diverge. That coherent experience users first encountered becomes a patchwork of inconsistent interactions.

One engineer extends your button component with a spinner overlay for loading states. Another engineer on a different squad creates a completely separate LoadingButton component for similar functionality. Both solutions work technically. Neither maintains system integrity.

UX/UI support during development includes design system governance. Someone needs ownership over reviewing new patterns, documenting extensions, and deprecating outdated approaches. Not full-time work. But consistent work.

Quarterly audits catch drift before it compounds. Monthly component reviews ensure additions align with existing principles. These rhythms create accountability without overwhelming already-stretched teams.
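Part of an audit like this can be mechanical. The sketch below is a minimal illustration, assuming you can list the components documented in the design system and the components actually imported in production code. The `audit_components` helper and both lists are hypothetical; how you collect them depends on your stack.

```python
def audit_components(registered, in_use):
    """Compare documented components with those found in production code."""
    registered = set(registered)
    in_use = set(in_use)
    return {
        # Used in production but never added to the design system.
        "undocumented": sorted(in_use - registered),
        # Documented but no longer used anywhere.
        "unused": sorted(registered - in_use),
    }


# Illustrative lists only.
design_system = ["Button", "Modal", "Tooltip"]
production = ["Button", "LoadingButton", "Modal"]
report = audit_components(design_system, production)
print(report)
```

An undocumented entry such as a separate `LoadingButton` is a prompt for a conversation: fold it into the existing button, or document it as a deliberate extension.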

Helping New Hires Actually Learn the System

Scaling organizations onboard designers and developers constantly. Each new hire learns your design system through some mix of documentation, example code, and "go ask Marcus."

Well-maintained systems lean heavily on documentation. Poorly maintained ones depend on institutional memory, which vanishes when people leave or get too busy for questions. Ongoing design support keeps docs current and flags when production code drifts from documented patterns.

Proving the ROI on Continuous Design

Skeptics see post-launch design involvement as a cost without obvious output. Development work produces countable features. Design work doesn't fit that mold.

But measurement works fine if you track the right things. Task completion rates. Time-to-value for new users. Support ticket volume for specific features. Conversion rates at critical decision points. Improvements here directly reflect design impact.
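As a rough illustration of a before-and-after comparison, the sketch below computes a completion rate and its change in both percentage points and relative terms. The function names and session counts are hypothetical. The counts are chosen to mirror the vendor form scenario earlier in the article, where 34% abandonment corresponds to 66% completion and 12% abandonment to 88%.

```python
def completion_rate(completed, started):
    """Fraction of started tasks that were completed."""
    if started == 0:
        raise ValueError("no sessions started")
    return completed / started


def compare(before, after):
    """Return (percentage-point change, relative change) between two rates."""
    point_change = (after - before) * 100
    relative_change = (after - before) / before
    return point_change, relative_change


# Illustrative counts only.
before = completion_rate(660, 1000)
after = completion_rate(880, 1000)
points, relative = compare(before, after)
print(round(points, 1), round(relative, 3))
```

Reporting both figures avoids a common confusion: a 22-point gain on a 66% baseline is roughly a one-third relative improvement, and the two numbers answer different questions.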

Retention rates reveal even more. Users who have frustrating experiences leave. Design improvements reducing friction boost continued engagement. Keeping one customer costs far less than acquiring a new one, and good design keeps more customers around.

Some design contributions prevent problems rather than fix them. Harder to measure. Equally valuable. A designer catching a usability issue during development saves the entire cost of post-launch discovery, prioritization, scheduling, implementation, testing, and deployment. The exact multiplier varies by organization, but the direction is consistent: fixing a problem later costs more than fixing it earlier.

When Outside Help Makes Sense

Internal design teams face capacity constraints. Product launches consume attention. New initiatives compete for resources. Supporting shipped products often loses priority—not because it lacks value, but because new work feels more urgent.

External UX/UI support fills gaps without permanent headcount increases. Professional UX/UI design services offer structured engagement models aligning with product lifecycles. Development-phase support follows different patterns than post-launch optimization, and recognizing those distinctions helps you pick the right partnership structure.

Whatever approach you choose, start with three commitments: design representation in sprint reviews or equivalent checkpoints, monthly design system maintenance sessions, and quarterly product experience audits that examine live applications against design standards and user feedback. A few hours a week, distributed appropriately, maintain quality across development and post-launch phases.

The Competitive Reality

Your competitors ship products too. The difference shows up eighteen months later when their interfaces feel like archaeological dig sites—layer upon layer of improvised fixes—while yours still feels intentional. That gap compounds quietly. Eventually, switching costs become the only thing keeping their users around. Yours stay because they want to.

Ready to establish continuous UX/UI support for your products? Our structured approach connects design excellence to business outcomes throughout product lifecycles. Contact us to discuss how an ongoing design partnership can strengthen your digital products.

FAQs

How much UX/UI support do products typically need after launch?

It depends heavily on product complexity, user volume, and growth stage. Some mature products need just a few hours weekly for maintenance. High-growth applications or those undergoing major iterations might need dedicated part-time design resources. Start by tracking where design questions actually arise and scale from there.

What's the difference between design QA and traditional QA?

Traditional QA asks, "Does it work?" Design QA asks, "Does it feel right?" Both examine the same builds with completely different success criteria.

Should the same designers provide ongoing support?

Original designers remember why that weird edge case got handled that way. New designers spot the workaround everyone stopped questioning three sprints ago. Best setup? Pair them. The veteran explains context, the newcomer challenges assumptions.

How do you measure ROI on post-launch design support?

Track task completion rates, time-on-task, conversion rates, and support ticket volume. Compare before and after design interventions. The timeline for seeing results varies by what you're measuring. Quick fixes like clearer labels show impact within weeks. Larger UX overhauls take longer to reflect in retention numbers.

When does external support make more sense than building internal capacity?

Bring in external support when you need surge capacity for a big launch, specialized skills your team lacks, or an honest assessment of a product everyone internal has grown blind to. Build internally when your product requires deep domain expertise that takes years to develop. Most B2B companies end up doing both: an internal team owns the day-to-day, and external partners handle audits and overflow.

About Us

Neuron is a San Francisco–based UX/UI design agency specializing in product strategy, user experience design, and DesignOps consulting. We help enterprises elevate digital products and streamline processes.

With nearly a decade of experience in SaaS, healthcare, AI, finance, and logistics, we partner with businesses to improve functionality, usability, and execution, crafting solutions that drive growth, enhance efficiency, and deliver lasting value.

Want to learn more about what we do or how we approach UX design? Reach out to our team or browse our knowledge base for UX/UI tips.